Every month, on the third Tuesday, we at MADIC dynamics will be presenting a Knowledge Hub Webinar on Microsoft Dynamics 365 Business Central and related products. I will be presenting some of the webinars, but other consultants will be involved as well, so there will be a variety of presenters over time.

We aim to have the next three monthly Knowledge Hub Webinars scheduled, with their details available. The next three webinars, starting next month, are:

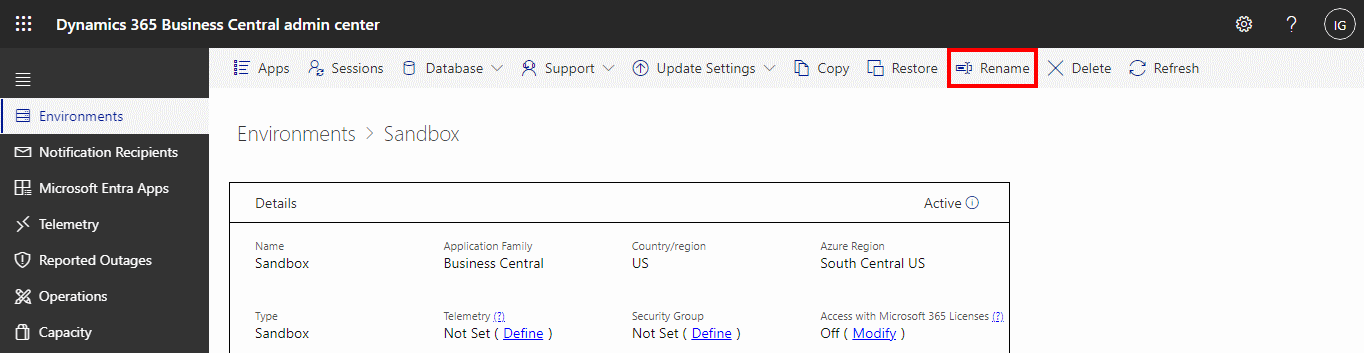

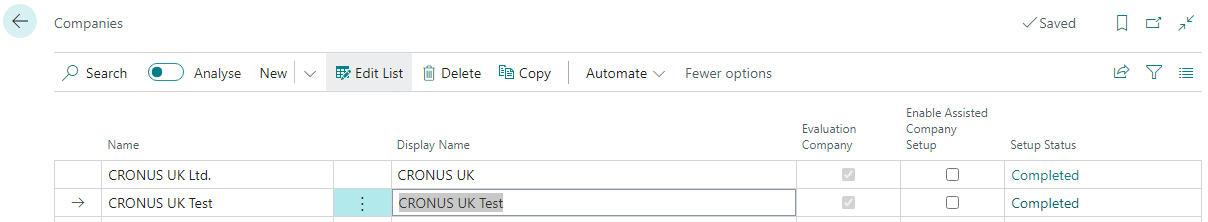

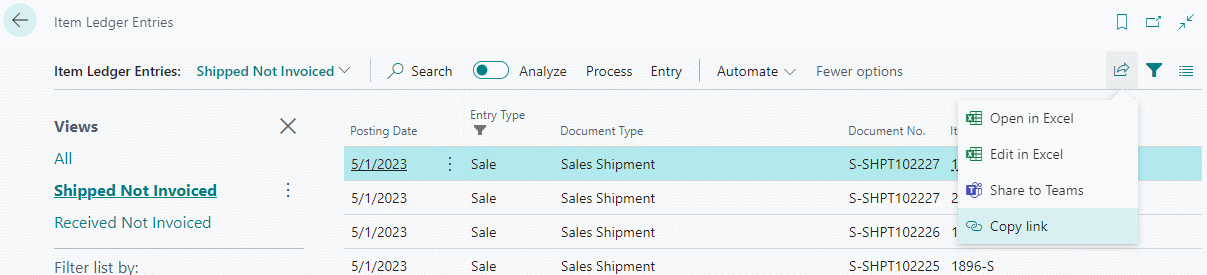

What’s New in Business Central 2024 Wave 1

Tuesday, 21st May 2024 – 14:00-14:45

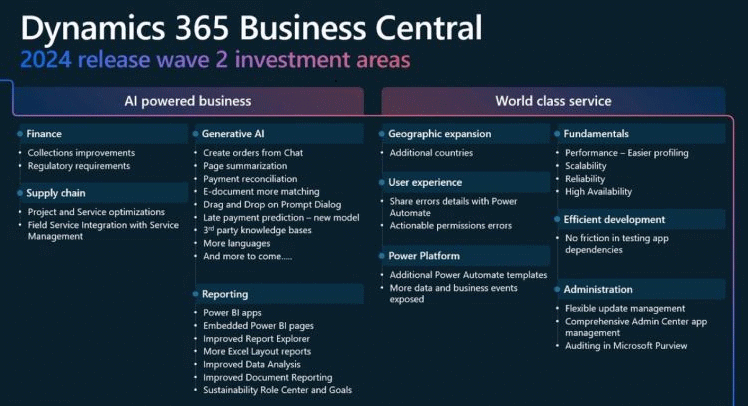

Discover the upcoming new features in Microsoft Dynamics 365 Business Central 2024 Wave 1.

Upgrading (to) Business Central

Tuesday, 18th June 2024 – 14:00-14:45

Learn the ins and outs of upgrading from Microsoft Dynamics NAV to Business Central, and the steps for upgrading to new versions of Business Central.

Document Capture for Business Central

Tuesday, 16th July 2024 – 14:00-14:45

Scan documents and create invoices in Business Central using Continia Document Capture.